What is a Determinant?

Sunday, February 12th, 2012

I was watching Gilbert Strang’s 18th lecture in 18.06 Linear Algebra a couple of days ago, and he laid out a theory of determinants that started from a few basic properties and derived all the usual results. However, he provided essentially no motivation for what he was doing. Why these properties? How did anyone ever think of these particular axioms? And more tellingly, what is a determinant, really? I don’t mean the official definition (here quoted from Wikipedia and similar to Strang’s):

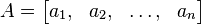

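If we write an n-by-n matrix in terms of its column vectors

$$A = \begin{bmatrix} a_1 & a_2 & \cdots & a_n \end{bmatrix}$$

where the $a_j$ are vectors of size n, then the determinant of A is defined so that

$$\det \begin{bmatrix} a_1 & \cdots & b\,a_j + c\,v & \cdots & a_n \end{bmatrix} = b \det(A) + c \det \begin{bmatrix} a_1 & \cdots & v & \cdots & a_n \end{bmatrix}$$

$$\det \begin{bmatrix} a_1 & \cdots & a_j & a_{j+1} & \cdots & a_n \end{bmatrix} = -\det \begin{bmatrix} a_1 & \cdots & a_{j+1} & a_j & \cdots & a_n \end{bmatrix}$$

$$\det(I) = 1$$

where b and c are scalars, v is any vector of size n and I is the identity matrix of size n. These properties state that the determinant is an alternating multilinear function of the columns, and they suffice to uniquely calculate the determinant of any square matrix. Provided the underlying scalars form a field (more generally, a commutative ring with unity), the definition below shows that such a function exists, and it can be shown to be unique.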
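To make the claim that these properties "suffice to uniquely calculate the determinant" concrete, here is a minimal Python sketch. It is not Strang's or Wikipedia's procedure, just one way to use the three properties: swapping two columns flips the sign, pulling a scalar out of a column multiplies the result by that scalar, and adding a multiple of one column to another changes nothing (a consequence of multilinearity plus alternation). The function name `det_from_axioms` and the list-of-rows input format are assumptions made for illustration.

```python
from fractions import Fraction


def det_from_axioms(rows):
    """Reduce the matrix to the identity by column operations,
    tracking how each operation changes the determinant."""
    n = len(rows)
    a = [[Fraction(x) for x in row] for row in rows]
    det = Fraction(1)
    for i in range(n):
        # Look for a column at position i or later with a nonzero entry in row i.
        pivot = next((j for j in range(i, n) if a[i][j] != 0), None)
        if pivot is None:
            # Row i depends on the rows above it, so the columns are
            # linearly dependent and the determinant is zero.
            return Fraction(0)
        if pivot != i:
            # Alternating property: swapping two columns flips the sign.
            for r in range(n):
                a[r][i], a[r][pivot] = a[r][pivot], a[r][i]
            det = -det
        # Linearity in column i: factor out the pivot value.
        p = a[i][i]
        det *= p
        for r in range(n):
            a[r][i] /= p
        # Subtracting a multiple of column i from another column
        # leaves the determinant unchanged.
        for j in range(n):
            if j != i and a[i][j] != 0:
                f = a[i][j]
                for r in range(n):
                    a[r][j] -= f * a[r][i]
    # The matrix is now the identity, and det(I) = 1.
    return det


print(det_from_axioms([[1, 2], [3, 4]]))  # -2
```

Exact `Fraction` arithmetic is used so that a zero determinant comes out as exactly zero rather than as a small floating-point residue.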

I can follow the derivation from that, but it doesn’t really explain what a determinant is. And the only alternative I could find in Wikipedia or the readily available textbooks was that it’s the volume of the parallelepiped whose sides are the vectors that make up the matrix. Again, that feels like a derived property, not a true definition. However, Mathworld did give me one big hint:

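For example, eliminating x, y, and z from the equations

$$a_1 x + a_2 y + a_3 z = 0$$

$$b_1 x + b_2 y + b_3 z = 0$$

$$c_1 x + c_2 y + c_3 z = 0$$

gives the expression

$$a_1 b_2 c_3 - a_1 b_3 c_2 + a_2 b_3 c_1 - a_2 b_1 c_3 + a_3 b_1 c_2 - a_3 b_2 c_1 = 0$$

which is called the determinant for this system of equation.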

So here’s the answer: the determinant being zero is the condition under which a set of linear equations has a non-trivial null space. Or, more simply, the vanishing of the determinant is the condition on the coefficients a, b, c… of a set of n linear equations in n unknowns under which they can be solved for the right-hand side (0, 0, 0, …, 0) with at least one of the unknowns (x, y, …) not zero. Let me prove that:
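Before the proof, a quick numerical sanity check of the claim for three equations in three unknowns. This is a sketch under stated assumptions, not part of the argument: it takes the coefficient rows as integer triples, evaluates the standard six-term 3-by-3 expansion, and, when that expression is zero, builds a non-zero (x, y, z) from a cross product of two rows. The helper names `det3`, `cross` and `nontrivial_solution` are hypothetical.

```python
def det3(a, b, c):
    """Six-term expansion for coefficient rows a, b, c of a 3-by-3 system."""
    return (a[0] * b[1] * c[2] - a[0] * b[2] * c[1]
            + a[1] * b[2] * c[0] - a[1] * b[0] * c[2]
            + a[2] * b[0] * c[1] - a[2] * b[1] * c[0])


def cross(u, v):
    """Cross product: a vector perpendicular to both u and v."""
    return (u[1] * v[2] - u[2] * v[1],
            u[2] * v[0] - u[0] * v[2],
            u[0] * v[1] - u[1] * v[0])


def nontrivial_solution(a, b, c):
    """Return a non-zero (x, y, z) solving all three homogeneous
    equations, or None when the expression is non-zero."""
    if det3(a, b, c) != 0:
        return None  # only x = y = z = 0 solves the system
    # The cross product of two rows solves those two equations; its dot
    # product with the third row is plus or minus det3, which is zero here.
    for u, v in ((a, b), (a, c), (b, c)):
        x = cross(u, v)
        if any(x):
            return x
    # All rows are parallel: anything perpendicular to a non-zero row works.
    r = next((row for row in (a, b, c) if any(row)), None)
    if r is None:
        return (1, 0, 0)  # every equation is 0 = 0
    for e in ((1, 0, 0), (0, 1, 0), (0, 0, 1)):
        x = cross(r, e)
        if any(x):
            return x


print(nontrivial_solution((1, 2, 3), (4, 5, 6), (7, 8, 9)))  # (-3, 6, -3)
print(nontrivial_solution((1, 0, 0), (0, 1, 0), (0, 0, 1)))  # None
```

For the first system the six-term expression is zero and (-3, 6, -3) satisfies all three equations; for the second it equals 1 and only the all-zero solution exists.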
